*NOTE: Servers typically have IPMI (remote management) and ECC memory.

You’ve got some old hardware lying around, so wouldn’t it be great to put it to work? Is it really possible to build a 500 dollar server? Well… this beast, Blackthorne, is the result. It’s fully operational, but possibly the worst combination we could have chosen, so find out where we screwed up and save yourself some money. You’ll need to consider what connectivity you need. How many PCIe slots will be used? And most importantly… do I even need this? What the heck were we thinking?

Today we’ll answer those questions. Your support helps us make better content like this, so please take a quick second to follow us on Instagram, Facebook and Twitter, and subscribe to our YouTube channel, all that good stuff. If you have hardware questions, leave them in the comments and we’ll try to answer them. Any updated info on this video will be on the techspinreview.com companion post. And next video, we’ll follow up with a cheap NAS build using new hardware. Let’s get to it.

Quick: How to Build a NAS

For a NAS, the hardest part will be finding a PC case with 8 to 10 hard drive bays, though some rackmount cases can be modified to run quietly, at a cost. The newer the motherboard and CPU, the better for your power bill, since operating costs factor into the total money you’ll spend on this. Choosing a motherboard with onboard graphics removes the need to run in headless mode (booting without a GPU), and info on which boards support headless mode is challenging to find.

Your motherboard will need 2 to 3 PCIe x16 slots (Intel boards generally have more PCIe slots): for SAS to SATA cards to connect up to 16 hard drives, an NVMe adapter if your board’s old, and network expansion like 10 or 40 gigabit Ethernet. You’ll need 16 gigs of RAM, but don’t buy DDR3, as you can’t carry it forward to a newer build. A cheap, small NVMe m.2 drive is perfect for cache; for older boards without an m.2 slot, cheap $10 PCIe adapter cards exist, though you can also use an SSD. For more hard drives than your motherboard can handle, you’ll need a SAS to SATA card that’s in IT mode, SAS breakout cables, and SATA power splitters.

Refurbished NAS hard drives are cheap on Amazon, and at 9 watts/2 amps per drive at startup, a 500~600 watt PSU should be fine with no graphics card, as long as you spread the load across the PSU wiring. However, do your research: drive idle power should be close to 1 watt, and old drives can use a lot more, ramping up operating costs quickly. Finally, you’ll need case fans for cooling, and internet on another PC to grab UnRaid. UnRaid gets installed on a 1 gig or larger USB stick that stays plugged in permanently, and UnRaid needs an internet connection to your new NAS to set up parity and run the array. We’ll leave any UnRaid questions below to the experts.

Not factored into the cost: the UnRaid license, any file-syncing software, and importantly, a UPS. You don’t want your NAS hit with power surges, and if you lose power, re-building your array will take a VERY LONG TIME; a 4TB parity rebuild can take around 9 to 12 hours. Finally, your NAS may need its own backup, and cheap, large external hard drives can stay offline until you’re ready for your nightly or weekly backup.

Parts needed to build a NAS:

PC case (8 to 10+ HDD bays)

Motherboard: 2-3 PCIe x16 (onboard graphics/headless)

CPU + cooler

SAS to SATA card (IT mode)

PCIe NVMe m.2 adapter?

10GbE/40GbE networking

16GB of RAM (don’t buy DDR3)

SAS to SATA breakout cables

SATA power splitters

Refurbished NAS HDDs

500~600 watt PSU

Case fans

Internet to download UnRaid

1GB USB 2.0 stick

Internet connection for the NAS

Short-term needs:

UnRaid license

File sync solution like Allway Sync

UPS to protect server

External HDDs for backup

1) The NAS Case is the hardest

We’re building a NAS, a Network Attached Storage device, which is basically a hard drive on your network that you can save files to, just like an internal hard drive. They can be small… or BIG. Putting a server together on a budget depends on how complete a PC you already have and how much storage you need. Old rackmount servers CAN be a good deal, but the noise they make means they’ll need a home outside your living area. Silencing some is possible, but it adds to the cost.

The case will probably be the most difficult choice, since any server case with lots of hard drive bays will be expensive. 95% of PC cases won’t have enough bays, but really try to find one with 8 to 10 bays that fits your motherboard. Side access is best, with rubber drive mounts to minimize vibration. Drives mounted in line with the case will make your job hard: the motherboard or wiring makes back access tricky, and front access is likely blocked by case metal or fans, and you need those fans. 5.25-inch hot-swap bays look cool, but they’re 90 bucks a pop.

The cheapest option we saw is a CoolerMaster N300 or N400, though installing drives with an ATX motherboard in place may be hard. DIYmediahome.org has a list of older cases with lots of bays, and a great one is the ThermalTake Core V71, which was 170 dollars, but all of these are discontinued, so good luck. We’ll cover a great new case next episode.

From sponsored links and as an Amazon Associate, we earn from qualifying purchases.

Please use our affiliate links for the Cooler Master cases at

AmazonUS: https://amzn.to/3V6LUfW AmazonUK: https://amzn.to/3W6Wx3n

AmazonCA: https://amzn.to/3W8SoMy AmazonIN: https://amzn.to/3V4OLpv

2) Intel or AMD motherboard for NAS

We researched and bought only the cards and wiring off eBay. If you’re buying used, look locally on Facebook Marketplace or similar for complete systems, as the parts already work together, saving time and money on parts hunting. The key will be keeping things fast for now while leaving room to expand in the future. I can hear it already in the comments: nice cable management, bro! I’ve got sleeved extensions for the show, but they won’t be in the finished build. Let’s keep it cheap.

We got this whole unit for 130 bucks, but we screwed up. This board is an EVGA Classified SR-2 from 2010, and it’s probably the worst pick for a server build, but it’s great for demonstrating what not to do, eh? What you’re actually looking for is a motherboard with 3 PCIe x16 slots and onboard graphics, paired with a low-wattage CPU, which is exactly what makes this SR-2 and dual-CPU combo a horrible purchase. We’ll touch on this later.

Intel CPUs often have integrated graphics on-chip, enabling the motherboard’s video out, but mainstream AMD CPUs often don’t. That means no video from your motherboard’s VGA or HDMI port, even if it has one. One advantage AMD does have is unofficial support for unbuffered Error Correcting Code (ECC) memory on its consumer CPUs; you just need to find a capable board. If you’re going to use the ZFS file system, ECC is recommended.

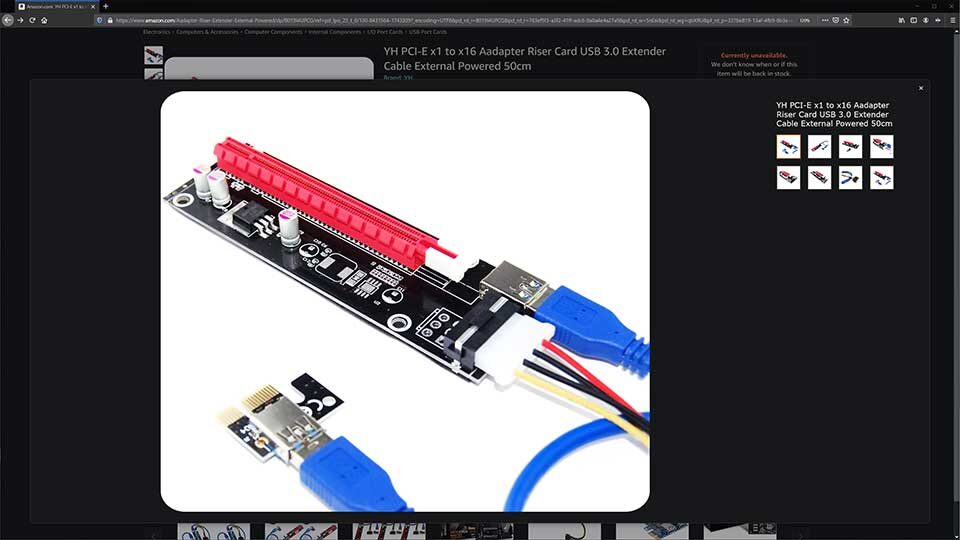

This SR-2 supports headless mode, which means booting without a GPU installed, but a lot of motherboards won’t. One solution is a cheap $10 PCIe riser that plugs into a PCIe x1 slot, so you can use an old, low-wattage graphics card with a full-length connector. You can also Dremel out the closed end of a PCIe x1 slot and the longer card will work, but components in the way and motherboard damage are real risks, so do you wanna chance it?

Why do you need 3 PCIe x16 slots? One or two for SAS to SATA cards, one for an NVMe adapter since old boards won’t have an m.2 slot, and the last for 10 gigabit or faster networking. Keep in mind some boards disable PCIe slots depending on your configuration, and some won’t have BIOS options to enable all slots. If you find a sweet motherboard, drop it in the comments and we’ll look at adding it to the techspinreview.com companion post.

3) RAM, NVMe, HDD, PCIe for NAS

You’ll need RAM. Since we’re using UnRaid, 4 gigs is enough, although if you want to run VMs, figure 16 gigs or so for each VM. Just don’t buy any DDR3. If your CPUs are using stock coolers, great, just clean them first, but if they’re noisy, you could use a CoolerMaster Hyper 212.

Old boards won’t have an NVMe m.2 slot, but with a cheap 10 dollar PCIe to NVMe adapter, you can use a stick you have lying around as server cache. These circuit boards just have traces connecting the m.2 port to the PCIe slot. You won’t be able to boot from it, or maybe even see it in Windows, but UnRaid saw the drive using either adapter. By the way, the rear slot covers aren’t necessary and may not fit properly, so don’t force them, just remove them.

You need NAS drives for a NAS, as they’re built to run 24/7 and tolerate vibration from adjacent drives. Install them properly using all four screws, and hopefully with rubber shock mounts. Don’t use normal desktop drives, as they’ll die quickly in a NAS. Browsing Amazon’s refurbished listings may get you up and running; just research each drive’s idle power draw, which should be 1 watt or a hair under.

Most motherboards can probably connect 6 hard drives. For more, there are 2-port or 4-port PCIe to SATA cards, but there’s a better option: a SAS HBA with breakout cables supports up to 8, yeah, 8 drives. We got an LSI SAS HBA, a Fujitsu D2607-A21, pre-flashed into IT mode for just 65 USD.

Each of the mini-SAS ports handles 4 hard drives, and since the card is pre-flashed, you don’t have to mess around for hours in Linux. We grabbed mini-SAS male to SATA breakout data cables, and a few SATA power male to 5-port SATA female splitter cables.
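To plan the SATA side before buying, here’s a rough sketch. It assumes about 6 onboard SATA ports (the “most motherboards” figure above), 4 drives per mini-SAS port, and a 2-port HBA like ours; `plan_hba` is just our own illustrative name, not from any tool.

```python
import math

# Assumptions from the text: ~6 onboard SATA ports, 4 drives per mini-SAS
# port, and a 2-port HBA (8 drives) like the Fujitsu D2607-A21.
DRIVES_PER_SAS_PORT = 4
PORTS_PER_HBA = 2


def plan_hba(total_drives: int, onboard_sata: int = 6) -> dict:
    """Estimate the HBAs and breakout cables needed beyond onboard SATA."""
    extra = max(0, total_drives - onboard_sata)
    cables = math.ceil(extra / DRIVES_PER_SAS_PORT)  # one breakout cable per port used
    hbas = math.ceil(cables / PORTS_PER_HBA)
    return {"extra_drives": extra, "breakout_cables": cables, "hbas": hbas}


print(plan_hba(12))  # {'extra_drives': 6, 'breakout_cables': 2, 'hbas': 1}
```

With 12 drives, this lands on one HBA and two breakout cables, which lines up with the parts we bought. Check your own board’s SATA count, since some ports may be shared or disabled.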

Finally, if your case has a 5.25-inch 3-bay spot, these cage racks come with rails and a fan mount, which is essential. This 30 dollar solution has no rubber shock mounts and just 3 mm spacing between drives, so you may want to leave the center bay empty for ventilation. Do NOT run hard drives without fans on them. We’d prefer a fan up front, but our Xeon intake fan is just 4 centimeters behind, so what can you do? Embrace the jank.

4) NAS Fans, PSU, Networking

Your case needs cooling, and Arctic F12-120s are the budget go-to at 10 bucks, sometimes 7 on sale. But at 14 bucks, Noctua’s new grey-toned, non-poo-colored NF-S12B redux-1200s deliver great airflow with low noise. They also have the NF-P12 redux, high static pressure fans that are great for radiators and pushing air through cramped spaces. For glass panels, CoolerMaster has 3-packs of 120 mm SickleFlow or Halo ARGB fans for $50 and $65.

Sponsor: Please use our affiliate link for Noctua Redux fans at

AmazonUS: https://amzn.to/3gV0dnk AmazonUK: https://amzn.to/3xHz0KK

AmazonCA: https://amzn.to/3tbOrHC AmazonIN: https://amzn.to/33aFu6Z

A NAS power supply should be in good condition; 500 watts or more is fine, since we won’t be using a graphics card. If you’re re-using an old PSU, check it to make sure there’s no rust anywhere. At power on, hard drives can momentarily peak at 9 to 10 watts at 2 amps before settling to normal power draw, so for 10 drives, budget 100 watts and 20 amps, which you can balance over your SATA and Molex wiring. If you’re lucky and have a gigabit network port, you’re set for now. In the future, you could grab 10 gigabit RJ-45 cards to take the easy way out, or 40 gigabit Mellanox ConnectX-3 Pro cards or better using QSFP, which will scale better.
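Back to the spin-up math in that paragraph: here’s a small sketch using the per-drive peak figures quoted in this post (your drives’ datasheets are the safer source, so swap those in). `spinup_budget` and `split_across_cables` are hypothetical helpers, and the even split is just one simple way to balance drives across your PSU’s SATA and Molex runs.

```python
# Per-drive spin-up peaks as quoted in this post; check your drives' datasheets.
PEAK_WATTS_PER_DRIVE = 10.0
PEAK_AMPS_PER_DRIVE = 2.0


def spinup_budget(drives: int) -> tuple:
    """Worst case: every drive spins up at the same moment."""
    return drives * PEAK_WATTS_PER_DRIVE, drives * PEAK_AMPS_PER_DRIVE


def split_across_cables(drives: int, cables: int) -> list:
    """Spread drives as evenly as possible over the PSU's SATA/Molex cables."""
    base, extra = divmod(drives, cables)
    return [base + 1 if i < extra else base for i in range(cables)]


watts, amps = spinup_budget(10)
print(watts, amps)                 # 100.0 20.0
print(split_across_cables(10, 3))  # [4, 3, 3]
```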

Finally, if you’re lucky, your old board may have gigabit connectivity, and that’ll be fine for now. If it’s just 100 megabit, a 1 gig network card is 15 bucks, a 2.5 gig card is 30, and 10 gig is 95-ish. These all use standard Ethernet cables, but you can pick up used SFP+ 10 gig optical cards for half that, though you’ll need to buy and set up an optical network, which is good for equipment not in the same room.
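To see why the link speed matters, here’s a best-case sketch of how long a hypothetical 100 GB project folder takes to move at each speed mentioned above. It uses raw line rate only; real transfers are slower because of protocol overhead and drive speed, so treat these as floor values.

```python
# Best-case (line-rate) time to move a hypothetical 100 GB project.
# Real-world throughput is lower because of protocol overhead and drive speed.
LINKS_GBPS = {"100 Mb": 0.1, "1 GbE": 1.0, "2.5 GbE": 2.5, "10 GbE": 10.0}


def transfer_minutes(size_gb: float, link_gbps: float) -> float:
    gigabits = size_gb * 8  # decimal gigabytes -> gigabits
    return gigabits / link_gbps / 60


for name, gbps in LINKS_GBPS.items():
    print(f"{name:>8}: {transfer_minutes(100, gbps):6.1f} min")
```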

5) Data sync, UPS and Backup

Our studio gigabit network will get an upgrade in the future. For now, we’ll pull our current project from the server directly to an editing PC’s NVMe drive, giving ultra-fast scrubbing through footage. Even though UnRaid should put the project on the server cache, this cuts out network lag and server drive response times; we’ll test this soon. To update server files, we’re using a program called Allway Sync, so as we edit in Premiere, scrubbing off the editing PC’s NVMe, changes are pushed to the server.

Allway Sync has been reliable. I’ve used it for 8 years to sync 267 gigs of MVs and music for DJing, and for the last 6 for full hard drive sync between PCs, currently 4.8 terabytes of data and 190,000 files, including Techspin assets. Allway Sync is amazing, but there’s a caveat: if you make changes on both machines without syncing, you’ll have to sort out the discrepancy yourself, though you can set up auto-handling rules. But if it’s running all the time on an editing machine and set to auto-sync every minute, there should be zero issues. A license is 26 bucks, and 16 for a second one. Totally worth it.
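Why do edits on both machines cause trouble? Here’s a generic sketch of how a two-way sync can classify a file by comparing each side’s modification time to the last sync. This is NOT Allway Sync’s actual logic, just an illustration of the idea; `sync_action` is a hypothetical name, and deletions are ignored to keep it short.

```python
from typing import Optional


def sync_action(left_mtime: Optional[float], right_mtime: Optional[float],
                last_sync: float) -> str:
    """Classify one file in a generic two-way sync (not Allway Sync's real logic).

    Times are modification timestamps; None means the file doesn't exist on that
    side. Deletions are deliberately ignored to keep the sketch short.
    """
    left_changed = left_mtime is not None and left_mtime > last_sync
    right_changed = right_mtime is not None and right_mtime > last_sync
    if left_changed and right_changed:
        return "conflict"  # edited on both machines since the last sync
    if left_changed:
        return "copy left -> right"
    if right_changed:
        return "copy right -> left"
    return "no change"


print(sync_action(left_mtime=200.0, right_mtime=210.0, last_sync=150.0))  # conflict
```

Syncing every minute shrinks the window in which both sides can change, which is why constant auto-sync keeps conflicts rare.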

Next, get a UPS to protect your server, and get it soon. We did an episode with CyberPower, link up here, covering options and runtimes for various capacities, or choose another brand, just get protected. If you need to redo NAS parity on the array, that can take 10 to 14 hours for each 4 terabytes, so at 12 terabytes or more, you’re looking at a full day or more. Can your business handle that downtime? Get protected.
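A quick way to estimate your own downtime is to scale the 10 to 14 hours per 4 TB rule of thumb from this post. This sketch assumes rebuild time grows linearly with parity size, which is a simplification; newer, larger drives are often faster, so it may overestimate for them.

```python
# Rule of thumb from this post: 10 to 14 hours per 4 TB of parity.
# Assumes linear scaling with parity size, which is a simplification.
HOURS_PER_4TB = (10.0, 14.0)


def rebuild_hours(parity_tb: float) -> tuple:
    """Return a (low, high) estimate in hours for a parity rebuild."""
    scale = parity_tb / 4.0
    low, high = HOURS_PER_4TB
    return low * scale, high * scale


print(rebuild_hours(4))   # (10.0, 14.0)
print(rebuild_hours(12))  # (30.0, 42.0)
```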

If you’re not using your NAS strictly as a backup, plan to back up the files on it too. How do you back up a 20 or 30 terabyte NAS? Well, who’s got two thumbs and sucks at Linux? THIS GUY! So we’ll use Allway Sync to grab the data off the server and push it to an external hard drive; more on that later. That’s probably easier than setting up rsync? If you find an easy UnRaid array backup guide, tell us and we’ll add it to our website post.
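For the curious, here’s the basic idea behind a one-way backup, sketched in Python: copy anything new or changed from the NAS share to the external drive, and never delete anything. It’s an assumption-heavy toy (same size plus same-or-newer modification time counts as “already backed up”, no deletions, no error handling), not a replacement for Allway Sync or rsync, and `mirror_one_way` plus the example paths are hypothetical placeholders.

```python
import shutil
from pathlib import Path


def mirror_one_way(src: Path, dst: Path) -> list:
    """Copy new or changed files from src to dst. Never deletes anything in dst."""
    copied = []
    for path in sorted(src.rglob("*")):
        if not path.is_file():
            continue
        target = dst / path.relative_to(src)
        if target.exists():
            s, t = path.stat(), target.stat()
            # Treat same size and same-or-newer mtime as "already backed up".
            if t.st_size == s.st_size and t.st_mtime >= s.st_mtime:
                continue
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(path, target)  # copy2 also copies the modification time
        copied.append(target)
    return copied


# Example (placeholder paths for your share and external drive):
# mirror_one_way(Path("/path/to/nas/share"), Path("/path/to/external/backup"))
```

Because `shutil.copy2` carries the modification time over, a second run skips files that haven’t changed.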

Our 10-year-old Build

Before we bought anything, what did we have on hand? Our oldest, an Asus P8Z68-V LX with a Core i7-2600K, has two PCIe slots, but its 100 meg network port got zapped, so that was out. Our MSI B360 Gaming Arctic is in our Media PC (link up here for the CoolerMaster TD500 Mesh build we did with it); it has NVMe and two PCIe x16 slots, but we didn’t want to rebuild that PC. Our secondary editing rig is an MSI Z270 XPower Gaming with an i7-7700K; with 4 PCIe x16 slots it’s perfect, but it has a cold boot bug that takes two or three starts to get it running, so maybe later. Amazingly, Facebook Marketplace was where we struck gold.

We lucked out with a fire sale! Sure, it’s a 10 year old motherboard, but that’s not THAT far out. We picked up an EVGA Classified SR-2 with dual Xeon X5650s at 2.67GHz with coolers, 40 gigs of HyperX DDR3-1600 memory filling all slots, two EVGA GTX 580 graphics cards, and an AMD FirePro V7900 graphics card?

I’ve barely even heard of FirePro, let alone seen one. Power comes from a SilverStone ST1500, a 1500 watt modular power supply and a beast, all inside a Lian Li PC-V2120X case capable of holding the SR-2’s huge HPTX form factor. Some Techspin lovin’ to clean it all up, and we’re good. This would’ve cost over 5000 bucks in 2010 money; parted out, it’s maybe worth $1200, and we got it for a steal at just 110 bucks.

Plugging in the graphics card, we lost the use of PCIe slots 3 and 4, which does happen, and couldn’t get them working in BIOS either. Slots further down had no issues, and there was no trouble with the NVMe and LSI SAS cards. From Amazon, we got three HGST 4 terabyte and five HGST 3 terabyte NAS drives, all refurbished, and we don’t recommend them; we’ll find out why in the cost/power draw section. A couple of spare NAS drives got thrown in, too.

TIP: Drive info

A huge time-saving tip for you: I’ve added info, including the last four characters of the serial number, on electrical tape on the back of each drive. Since UnRaid lists your drives by serial number, this info saves lots of time troubleshooting drives with SMART errors, overheating, or wiring issues. About 10 minutes taping info on all the drives saves hours.
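If you’d rather print labels than copy serials by hand, this sketch parses the output of `lsblk -d -n -o NAME,SERIAL` (whole disks only, no header row, name and serial columns), which most Linux systems provide. `drive_labels` is our own hypothetical helper, and the serials below are made up.

```python
def drive_labels(lsblk_output: str) -> list:
    """Build short tape labels from `lsblk -d -n -o NAME,SERIAL` output."""
    labels = []
    for line in lsblk_output.splitlines():
        parts = line.split()
        if len(parts) < 2:
            continue  # skip devices that report no serial number
        name, serial = parts[0], parts[-1]
        labels.append(f"{name}: {serial[-4:]}")
    return labels


# Made-up serials, for illustration only.
sample = "sda  EXAMPLE0001\nsdb  EXAMPLE0002\nloop0"
print(drive_labels(sample))  # ['sda: 0001', 'sdb: 0002']
```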

UnRaid. We hit $500!

You’ll need another PC to download UnRaid and install it on a USB drive. The minimum is 1 gig, and USB 2.0 can be more reliable; we’re using a SanDisk Ultra USB 3.0 16GB, which is overkill. Plug the USB stick into the rear motherboard I/O and boot, and don’t miss the GUI startup selection. If BIOS completes and there’s just a blinking cursor at the top left, check in BIOS that your USB is set as the first device in the boot order. By the way, the SR-2 took 2 minutes 5 seconds from boot until UnRaid was usable over the network.

Using UnRaid, your biggest hard drive has to be your parity drive. Since our biggest is 4 terabytes, we lose that from the array, but the usable size is still 32.5 terabytes, not too shabby. The UnRaid lifetime license is 60 bucks for 6 storage devices, 90 for 12, and 130 for unlimited, and the parity and cache drives count towards this, though you can upgrade a tier later for the cost difference plus 10 bucks.
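Here’s a small sketch of both rules from that paragraph, assuming a single parity drive: the largest drive goes to parity, the rest count toward usable space, and every device (parity and cache included) counts toward the license tier. The tier prices are the ones quoted in this post and may have changed since; the drive sizes are a made-up example.

```python
# License tiers as quoted in this post (prices may have changed since).
LICENSE_TIERS = [(6, 60), (12, 90), (None, 130)]  # (max devices, USD); None = unlimited


def usable_tb(drive_sizes_tb: list):
    """Single parity: the largest drive becomes parity, the rest hold data."""
    sizes = sorted(drive_sizes_tb)
    return sum(sizes[:-1])


def license_price(device_count: int) -> int:
    """Cheapest tier covering every device, parity and cache included."""
    for max_devices, price in LICENSE_TIERS:
        if max_devices is None or device_count <= max_devices:
            return price
    raise ValueError("no tier fits")  # unreachable while an unlimited tier exists


# Hypothetical array: two 4 TB and two 3 TB drives, plus one NVMe cache.
drives = [4, 4, 3, 3]
print(usable_tb(drives))               # 10
print(license_price(len(drives) + 1))  # 60
```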

NAS Total Cost, Power Draw

So that’s 110 for the whole system and 390 for all the drives: 500 bucks! If you downscale the drives a bit, you can still get UnRaid and hit 500. Additions were the PCIe to NVMe card for 10 bucks, the LSI SAS card at 60, two SAS to SATA breakout cables at 15 each, two SATA power splitters at 15 each, and the cage mount at 40, so an extra 170 on top, but those aren’t necessary if you’re starting small.
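Here’s the tally from that paragraph as a tiny script, so you can swap in your own prices. The UnRaid license isn’t included, matching the “not factored in” list at the top.

```python
# Prices from this build, in USD.
base = {
    "whole used system": 110,
    "refurbished NAS drives": 390,
}
extras = {
    "PCIe to NVMe adapter": 10,
    "LSI SAS HBA": 60,
    "SAS to SATA breakout cables (2 x 15)": 2 * 15,
    "SATA power splitters (2 x 15)": 2 * 15,
    "drive cage": 40,
}

base_total = sum(base.values())
extras_total = sum(extras.values())
print(base_total, extras_total, base_total + extras_total)  # 500 170 670
```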

When it comes to the lowest cost server build, power draw is a huge factor, and it’s where we screwed up. With the GPU and 12 drives, the power-on spike hit 430 watts, then 325 booting up and 300 after a minute with the array running. The Intel 5520 chipset and dual Xeon X5650s are power pigs: with the 45 watt GPU and the drives disconnected, we hit a 220 watt initial spike, 185 during boot and 173 getting into UnRaid. Insane.

We also botched the hard drive purchases. These 12 NAS drives alone accounted for a 181 watt initial bump, 95 watts during boot and 82 watts with UnRaid’s array running. Unfortunately, these old hard drives gulp power, roughly 15 watts each initially and 7 watts at idle. Compare that to a new Seagate IronWolf 12TB NAS drive that powers on at 8 watts and idles at 0.8 watts, an 88% energy saving at idle. I realize UnRaid has a 15 minute spin-down setting, which we ran out of time to test, but the verdict is clear: buy new drives and save long term.
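To check the 88% figure and see what it means over a year, here’s a sketch using the idle numbers above. It assumes the drives never spin down, so UnRaid’s spin-down setting would shrink both totals.

```python
HOURS_PER_YEAR = 24 * 365


def idle_saving_percent(old_idle_w: float, new_idle_w: float) -> float:
    return (1 - new_idle_w / old_idle_w) * 100


def annual_kwh(watts: float) -> float:
    """Energy used in a year if the load runs 24/7."""
    return watts * HOURS_PER_YEAR / 1000


print(round(idle_saving_percent(7.0, 0.8), 1))                    # 88.6
print(round(annual_kwh(12 * 7.0)), round(annual_kwh(12 * 0.8)))  # 736 84
```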

Anyway, without drives, the dual Xeon system is pulling 173 watts at idle, and that’s crazy. A new system can range from 80 watts for a high-end build to as low as 20 watts with minimal components and the right chipset. So the real difference is that with old parts you save up front, and with new parts you save long term on your power bill. Well, I guess this’ll make an excellent doorstop? Using a program like Allway Sync to back up data to another hard drive is BY FAR the cheapest option, but as you grow, you may need to think bigger.
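How fast does a newer, lower-power system pay for itself? This sketch computes the break-even point from the idle draw difference. The 173 W and 20 W figures come from this post, while the $0.15/kWh rate and the $300 upgrade price are hypothetical; plug in your own.

```python
HOURS_PER_YEAR = 24 * 365


def annual_cost(watts: float, price_per_kwh: float) -> float:
    return watts * HOURS_PER_YEAR / 1000 * price_per_kwh


def breakeven_months(upgrade_cost: float, old_watts: float, new_watts: float,
                     price_per_kwh: float) -> float:
    """Months of 24/7 running before the power savings pay for an upgrade."""
    saving_per_year = annual_cost(old_watts, price_per_kwh) - annual_cost(new_watts, price_per_kwh)
    if saving_per_year <= 0:
        return float("inf")  # the upgrade never pays for itself on power alone
    return upgrade_cost / (saving_per_year / 12)


# Hypothetical inputs: $0.15/kWh and a $300 platform upgrade.
print(f"{breakeven_months(300, 173, 20, 0.15):.1f} months")  # 17.9 months
```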

If you have old hardware lying around or get cheap parts, try your hand at this; we learned a ton in the process and hope we helped you too. If you get your feet wet and like having a NAS, upgrading sooner means the cost will be offset by the power savings.

Also, a massive shout-out to the forums at servethehome.com; members there helped with questions we had and improved the info in this episode, thanks guys. If you decide to pick up some gear on Amazon, shopping through our affiliate links helps us out at no extra cost to you. And follow us on Twitter, Instagram and Facebook at techspinreview.

We seriously cut down this script, so we’re sure you’ve got thoughts and questions; join the discussion down below. Please take a second to hit Like, subscribe, and ring the bell. We often reply to your feedback, so if you have a question, fire away. We’ll try to reply to hardware questions, but UnRaid software questions we’ll leave to the experts out there. We really appreciate you watching this far, thanks for your time, and tune in for part 2 of this guide. Bye for now!
